How Does Dolphin Network Operate? An Analysis of the Complete Decentralized AI Inference Process

The rapid development of AI models is fueling a surge in global GPU demand. As large language models (LLMs), AI Agents, and automation applications scale, traditional centralized AI cloud platforms are increasingly challenged by high costs, resource concentration, and scalability pressures. In this environment, decentralized GPU networks are emerging as a key direction for Web3 AI infrastructure.

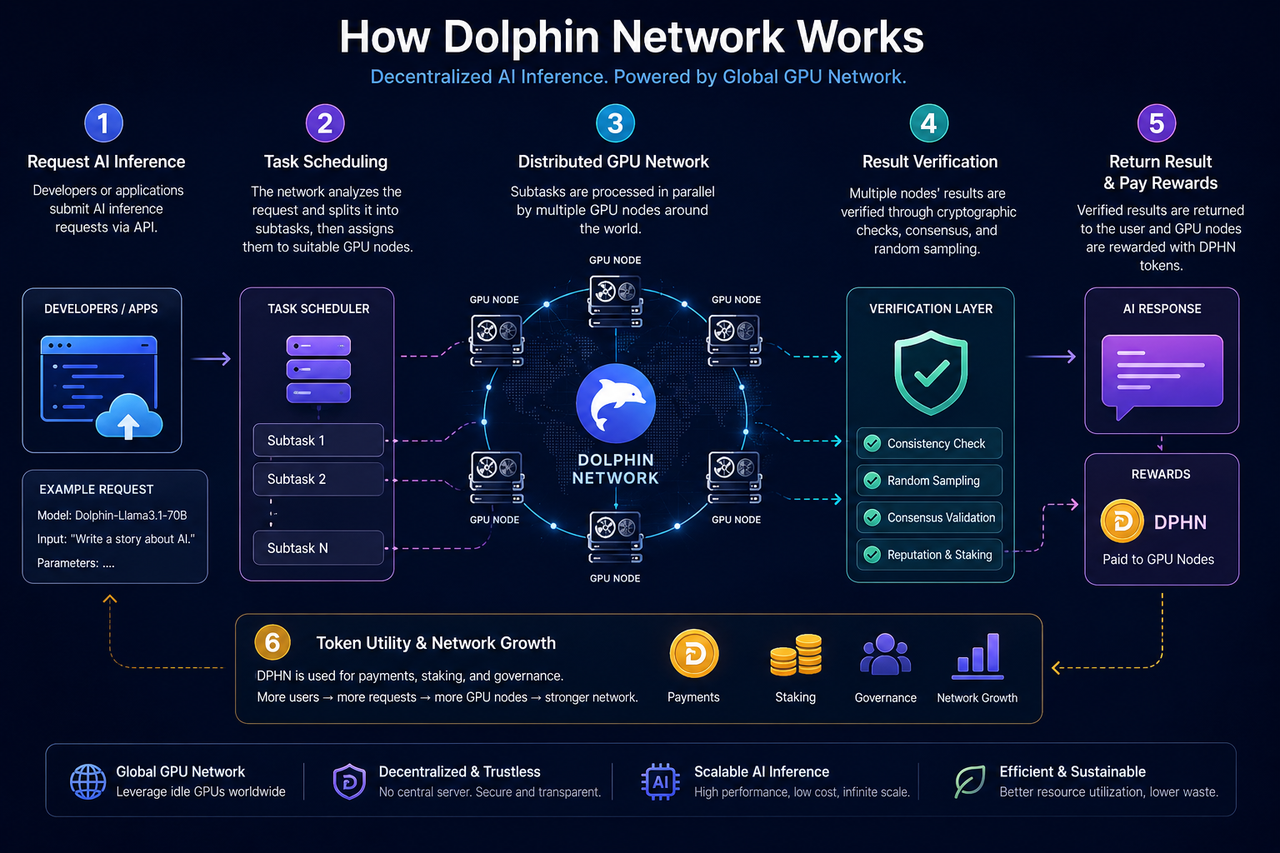

Dolphin Network is an AI inference network developed in response to this trend. Its primary goal is to aggregate globally distributed GPU resources into open AI infrastructure and to coordinate developers, GPU nodes, and the network through the DPHN incentive mechanism.

What Is the Core Structure of Dolphin Network?

Dolphin Network’s core architecture consists of three components: AI inference requesters, the GPU node network, and a verification-coordination mechanism.

Developers or applications can submit AI inference requests—such as text generation, chat inference, model invocation, or AI Agent tasks—to the network. The system dynamically allocates requests to appropriate nodes based on GPU node status, task requirements, and resource availability.

GPU nodes are contributed by global users. Participants can join the network with idle GPUs, run inference tasks locally, and earn token rewards based on their contributions.

To ensure result integrity, Dolphin employs both verification and economic mechanisms to coordinate node behavior, including random sampling, task review, and staking systems.

How Do AI Inference Requests Enter the Network?

When a developer interacts with Dolphin Network, requests are first routed to the task scheduling layer.

This layer analyzes task type, GPU requirements, and model resources. Different AI models may require varying memory configurations, inference speeds, and compute power, so the network dynamically matches requests to nodes based on their status.
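The matching step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `GpuNode` and `InferenceRequest` types, their fields, and the "least loaded eligible node" rule are hypothetical and are not taken from Dolphin's actual scheduler.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GpuNode:
    node_id: str
    vram_gb: int          # total GPU memory available on the node
    active_tasks: int     # tasks the node is currently running
    online: bool = True


@dataclass
class InferenceRequest:
    request_id: str
    model: str
    min_vram_gb: int      # memory assumed necessary to load the model


def pick_node(request: InferenceRequest, nodes: List[GpuNode]) -> Optional[GpuNode]:
    """Return the least-loaded online node with enough memory, or None."""
    eligible = [
        n for n in nodes
        if n.online and n.vram_gb >= request.min_vram_gb
    ]
    if not eligible:
        return None
    # Prefer idle nodes; on equal load, prefer the smaller card so that
    # large-memory nodes stay free for large models.
    return min(eligible, key=lambda n: (n.active_tasks, n.vram_gb))


nodes = [
    GpuNode("node-a", vram_gb=24, active_tasks=2),
    GpuNode("node-b", vram_gb=16, active_tasks=0),
    GpuNode("node-c", vram_gb=80, active_tasks=0),
    GpuNode("node-d", vram_gb=48, active_tasks=0, online=False),
]
request = InferenceRequest("r1", model="example-13b", min_vram_gb=20)
chosen = pick_node(request, nodes)
too_large = pick_node(InferenceRequest("r2", "example-xl", min_vram_gb=100), nodes)
```

Here `node-b` is skipped for lack of memory and `node-d` because it is offline, so the request goes to the idle `node-c`. A production scheduler would likely also weigh latency, bandwidth, and node reputation.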

On centralized AI cloud platforms, this process is managed by a single data center. In Dolphin, tasks are distributed across a decentralized GPU node network.

Some tasks may be split into multiple smaller inference requests to enhance overall efficiency and network concurrency.
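As a rough sketch of what splitting could look like, the snippet below breaks a batch of prompts into fixed-size chunks and spreads them across nodes in round-robin order. The chunking rule, the function names, and the node IDs are assumptions made for illustration; the source does not describe how Dolphin actually partitions tasks.

```python
from typing import Dict, List


def split_batch(prompts: List[str], chunk_size: int) -> List[List[str]]:
    """Split a batch of prompts into chunks of at most chunk_size items."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    return [prompts[i:i + chunk_size] for i in range(0, len(prompts), chunk_size)]


def assign_round_robin(
    chunks: List[List[str]], node_ids: List[str]
) -> Dict[str, List[List[str]]]:
    """Distribute chunks across nodes in round-robin order."""
    if not node_ids:
        raise ValueError("at least one node is required")
    assignment: Dict[str, List[List[str]]] = {nid: [] for nid in node_ids}
    for i, chunk in enumerate(chunks):
        assignment[node_ids[i % len(node_ids)]].append(chunk)
    return assignment


prompts = [f"prompt-{i}" for i in range(5)]
chunks = split_batch(prompts, chunk_size=2)
plan = assign_round_robin(chunks, ["node-a", "node-b"])
```

With five prompts and a chunk size of two, the batch becomes three sub-requests that two nodes can process concurrently.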

How Do GPU Nodes Process AI Inference Tasks?

GPU nodes are the primary compute resources of Dolphin Network.

Node operators typically deploy designated software and permit the system to utilize local GPUs for AI inference tasks. When a task is assigned, the node downloads the relevant model or inference parameters and performs the computation locally.

Upon completion, the node submits inference results back to the network and awaits verification to confirm result validity. Only tasks that pass verification are eligible for token rewards.
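The lifecycle above (assignment, result submission, verification, reward) can be modeled as a small state machine. The state names, the `Task` fields, and the reward amount below are hypothetical; the sketch only shows the rule that rewards are paid for verified results alone.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional


class TaskState(Enum):
    ASSIGNED = auto()
    SUBMITTED = auto()
    VERIFIED = auto()
    REJECTED = auto()


@dataclass
class Task:
    task_id: str
    node_id: str
    reward: int                      # reward in smallest token units (assumed)
    state: TaskState = TaskState.ASSIGNED
    result: Optional[str] = None


def submit_result(task: Task, result: str) -> None:
    """Node hands its inference output back to the network."""
    if task.state is not TaskState.ASSIGNED:
        raise ValueError("result already submitted")
    task.result = result
    task.state = TaskState.SUBMITTED


def finalize(task: Task, passed_verification: bool) -> int:
    """Close the task and return the payout: full reward or nothing."""
    if task.state is not TaskState.SUBMITTED:
        raise ValueError("task has no submitted result to verify")
    task.state = TaskState.VERIFIED if passed_verification else TaskState.REJECTED
    return task.reward if passed_verification else 0


good = Task("t1", "node-a", reward=10)
submit_result(good, "generated text")
good_payout = finalize(good, passed_verification=True)

bad = Task("t2", "node-b", reward=10)
submit_result(bad, "garbage")
bad_payout = finalize(bad, passed_verification=False)
```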

This approach differs from traditional GPU mining. While PoW networks spend GPU cycles on hash computation, Dolphin’s GPU nodes execute real AI inference tasks, which makes the network closer to a marketplace for usable compute power.

How Does Dolphin Verify AI Inference Results?

AI inference differs from standard blockchain transactions, as results usually cannot be validated by simple mathematical formulas. Dolphin therefore relies on additional mechanisms to prevent nodes from submitting incorrect results.

A common approach is random sampling—selecting tasks at random for review to confirm consistent results across multiple nodes. Persistent submission of abnormal data can reduce a node’s reputation or disqualify it from rewards.
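A minimal sketch of sampling-based review follows. It assumes that outputs can be compared for exact equality (for example, with deterministic decoding), that the majority answer is treated as correct, and that reputation is tracked as integer points. The sampling rate, penalty, and threshold values are illustrative and are not Dolphin's parameters.

```python
import random
from collections import Counter
from typing import Dict, List, Tuple


def sample_for_review(task_ids: List[str], rate: float, rng: random.Random) -> List[str]:
    """Select each task for review independently with probability `rate`."""
    return [t for t in task_ids if rng.random() < rate]


def review(results: Dict[str, str]) -> Tuple[str, List[str]]:
    """Compare outputs from several nodes for one task.

    Returns the majority output and the nodes that disagreed with it.
    """
    majority, _ = Counter(results.values()).most_common(1)[0]
    dissenters = sorted(n for n, out in results.items() if out != majority)
    return majority, dissenters


def penalize(
    reputation: Dict[str, int], dissenters: List[str],
    penalty: int = 20, threshold: int = 50,
) -> List[str]:
    """Lower reputation of dissenting nodes; return nodes now below threshold."""
    for node_id in dissenters:
        reputation[node_id] = max(0, reputation[node_id] - penalty)
    return sorted(n for n, score in reputation.items() if score < threshold)


rng = random.Random(7)
picked = sample_for_review(["t1", "t2", "t3", "t4"], rate=0.25, rng=rng)

reputation = {"node-a": 100, "node-b": 100, "node-c": 60}
majority, dissenters = review({"node-a": "4", "node-b": "4", "node-c": "5"})
ineligible = penalize(reputation, dissenters)
```

In this run `node-c` disagrees with the other two nodes, drops from 60 to 40 reputation points, and falls below the reward threshold. Real model outputs are often not bit-identical across hardware, so a practical system would likely compare results with a tolerance or a similarity score instead of strict equality.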

Some decentralized AI networks also use staking. Nodes are required to stake tokens to participate, and malicious actions may result in penalties to their staked assets.
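The staking idea can be illustrated with a toy registry: a node must hold a minimum stake to participate, and misbehavior burns part of that stake. The `StakeRegistry` class, the minimum stake, and the slash percentage are assumptions for illustration only; the source does not specify Dolphin's staking rules.

```python
from typing import Dict


class StakeRegistry:
    """Toy in-memory ledger of node stakes, in smallest token units."""

    def __init__(self, min_stake: int) -> None:
        self.min_stake = min_stake
        self.stakes: Dict[str, int] = {}

    def deposit(self, node_id: str, amount: int) -> None:
        if amount <= 0:
            raise ValueError("deposit must be positive")
        self.stakes[node_id] = self.stakes.get(node_id, 0) + amount

    def can_participate(self, node_id: str) -> bool:
        """A node may take tasks only while its stake meets the minimum."""
        return self.stakes.get(node_id, 0) >= self.min_stake

    def slash(self, node_id: str, percent: int) -> int:
        """Remove `percent` of a node's stake and return the amount removed."""
        if not 0 <= percent <= 100:
            raise ValueError("percent must be between 0 and 100")
        current = self.stakes.get(node_id, 0)
        penalty = current * percent // 100
        self.stakes[node_id] = current - penalty
        return penalty


registry = StakeRegistry(min_stake=1000)
registry.deposit("node-a", 1200)
eligible_before = registry.can_participate("node-a")
slashed = registry.slash("node-a", percent=25)
eligible_after = registry.can_participate("node-a")
```

After a 25% slash the node holds 900 units, which is below the assumed minimum, so it would need to top up its stake before taking new tasks.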

Ultimately, these economic incentives are designed to align node behavior and enhance network credibility.

How Does Dolphin Differ from Traditional AI Cloud Inference?

Traditional AI cloud platforms typically rely on large, centralized data centers—single entities control GPU clusters, model deployment, and API services.

Dolphin leverages an open GPU network architecture. GPU nodes are contributed by a global user base, enabling developers to access AI inference services in a more open environment and reducing reliance on a single provider.

Dolphin also emphasizes open AI models and resource sharing. Some networks support open-source model deployment, custom system rules, and open AI Agent scenarios.

However, distributed AI networks face challenges such as stability, network latency, and node quality variance, and thus remain in the early stages of development.

What Challenges Does Dolphin Network Face?

Decentralized AI inference networks offer openness and resource sharing but face several practical challenges.

First, GPU node performance varies widely. Differences in hardware memory, bandwidth, and inference capability can impact overall network stability.

Second, verifying AI inference results remains complex. Unlike blockchain transactions, AI outputs are probabilistic, increasing verification costs.

Third, as AI models grow larger, efficiently scheduling large-scale GPU clusters in a distributed environment becomes a critical challenge for AI DePIN projects.

Regulatory uncertainty is also a factor. Open AI models may raise concerns around data, copyright, and content generation, so AI infrastructure networks must navigate long-term regulatory risks.

Summary

Dolphin Network is a decentralized AI inference network that combines AI and DePIN, aiming to build open AI infrastructure using global GPU nodes. The network coordinates developers and GPU nodes through task scheduling, distributed inference, random verification, and the DPHN incentive mechanism.

Compared to traditional centralized AI cloud platforms, Dolphin emphasizes openness, resource sharing, and censorship resistance, which makes it a notable example of the decentralized approach to Web3 AI infrastructure.

FAQs

How Does Dolphin Utilize GPU Nodes?

GPU holders can deploy nodes and contribute idle GPU resources to execute AI inference tasks and earn DPHN rewards.

What Steps Are Involved in Dolphin’s AI Inference Process?

The main stages include task submission, node scheduling, GPU inference execution, result verification, and reward distribution.

Why Is Dolphin Considered a DePIN Project?

Its core resources are real-world GPU hardware, and it coordinates distributed infrastructure through token-based incentives.

How Does Dolphin Differ from Traditional AI Cloud Platforms?

Traditional AI cloud platforms rely on centralized data centers; Dolphin uses an open GPU network to deliver distributed AI inference services.

What Is the Role of DPHN in the Network?

DPHN is used for AI inference payments, node rewards, staking, and as an economic incentive within the network.
