How does Akash Network differ from AWS? A comparison of decentralized cloud versus traditional cloud

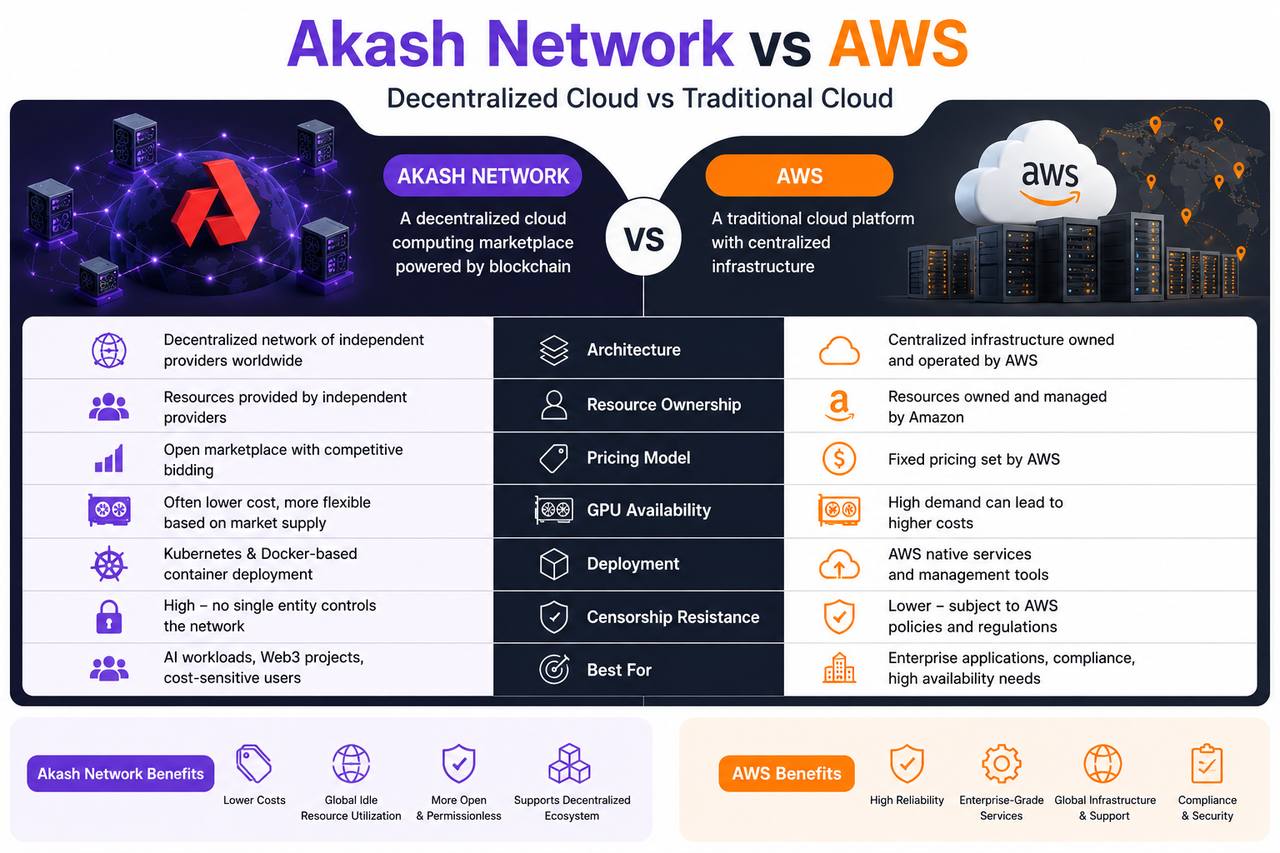

Akash Network and AWS are both widely used for cloud computing and GPU deployment. While both platforms provide developers with servers, storage, and GPU resources for AI, they differ fundamentally in resource organization, market structure, and operating model. AWS is a classic centralized cloud platform, whereas Akash is a blockchain-powered decentralized cloud computing network.

With the rapid rise in demand for AI model training, large language models (LLMs), and GPU inference, the cloud computing industry is seeing new trends in resource allocation. Traditional cloud platforms rely on large data centers for unified service delivery, while decentralized cloud marketplaces seek to build open GPU networks by tapping into idle computing power around the world.

Overview and Core Differences Between AWS and Akash Network

AWS (Amazon Web Services) is Amazon’s centralized cloud computing platform and one of the largest cloud service systems worldwide. Its core approach is for Amazon to build and operate data centers directly, then offer developers and enterprises on-demand computing resources.

Many internet platforms, AI companies, and traditional businesses depend on AWS for infrastructure services. Beyond servers and storage, AWS has developed a robust AI service ecosystem, including GPU cloud instances, machine learning platforms, database systems, and network services.

Akash Network, as a decentralized cloud computing network, aims to build an open marketplace for GPUs and other computing power. Unlike AWS, Akash does not own large data centers; instead, it connects compute providers (Providers) around the world with developers through its blockchain network.

| Comparison Dimension | Akash Network | AWS |
|---|---|---|
| Infrastructure | Decentralized provider network | Centralized data centers |
| GPU Pricing | Market bidding | Unified pricing set by Amazon |
| Resource Source | Idle computing power worldwide | Amazon-operated resources |
| Deployment Model | Kubernetes + Docker | AWS cloud service ecosystem |
| Audit Capability | Relatively low | Unified platform control |
| Enterprise Support | Limited | Mature enterprise-level services |
| AI Service Ecosystem | Open deployment | Comprehensive AI tool suite |
| Web3 Compatibility | Strong | Limited |

How Do Akash and AWS Organize Resources Differently?

AWS builds its resource system on centralized data centers. The GPU, CPU, and storage resources rented by developers are sourced directly from Amazon’s managed server clusters.

Akash operates with a fundamentally different model. Its resources come from diverse global providers—including data centers, mining farms, enterprise servers, and individual GPU nodes. Akash does not directly control these resources; instead, it coordinates scheduling and settlement via blockchain-based market mechanisms.

This structural difference leads to distinct approaches to scaling. Traditional cloud platforms expand capacity by continuously building large data centers, while decentralized cloud marketplaces rely on dynamically integrating idle resources worldwide.

For the AI sector, this open marketplace model can raise GPU utilization and reduce the amount of computing power that sits idle.

How Do Akash and AWS Differ in GPU Pricing Mechanisms?

GPU pricing is a key distinction between the two platforms.

AWS uses a unified pricing model, with GPU rental rates set by Amazon. Given persistent demand for high-end GPUs, the cost of using popular GPUs like H100 and A100 is often high.

Akash employs an open bidding mechanism. After developers post their GPU requirements, providers in the network submit bids based on their available resources. Developers then choose the most suitable provider from multiple offers to deploy their workloads.

This market-driven approach enables more flexible GPU pricing. When supply is ample, developers can often secure computing power at costs below those of traditional cloud platforms.

However, prices in decentralized markets fluctuate with supply and demand, so price stability is generally lower compared to centralized platforms.
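The bidding flow described in this section can be illustrated with a minimal Python sketch. It is not Akash's actual API: the `GpuRequest` and `Bid` classes, their fields, the provider names, and the prices are all hypothetical, and "most suitable" is simplified here to "cheapest bid that meets the requirements", which is only one possible selection rule.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GpuRequest:
    """What the developer asks for (hypothetical fields)."""
    gpu_model: str
    gpu_count: int
    max_price_per_hour: float  # developer's price ceiling, arbitrary units


@dataclass(frozen=True)
class Bid:
    """A provider's offer in response to a request (hypothetical fields)."""
    provider: str
    gpu_model: str
    gpu_count: int
    price_per_hour: float


def select_bid(request: GpuRequest, bids: list[Bid]) -> Optional[Bid]:
    """Return the cheapest bid that satisfies the request, or None if none does."""
    eligible = [
        b for b in bids
        if b.gpu_model == request.gpu_model
        and b.gpu_count >= request.gpu_count
        and b.price_per_hour <= request.max_price_per_hour
    ]
    # Assumption: the developer picks purely on price among eligible offers.
    return min(eligible, key=lambda b: b.price_per_hour, default=None)


if __name__ == "__main__":
    request = GpuRequest(gpu_model="a100", gpu_count=1, max_price_per_hour=2.0)
    bids = [
        Bid("provider-a", "a100", 1, 1.8),
        Bid("provider-b", "a100", 2, 1.5),
        Bid("provider-c", "h100", 1, 1.2),  # wrong GPU model
        Bid("provider-d", "a100", 1, 2.5),  # above the price ceiling
    ]
    print(select_bid(request, bids))
```

In practice a developer might also weigh provider reputation, region, or uptime, so price is only one input to the choice.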

How Do Akash and AWS Differ in AI Workload Deployment?

AWS is designed as a comprehensive enterprise-grade AI service platform.

Developers can rent GPUs and leverage official AI services like SageMaker and Bedrock for model training, inference, and deployment. AWS offers mature APIs, databases, and security frameworks, making it ideal for traditional enterprises and large AI teams.

In contrast, Akash emphasizes open infrastructure capabilities.

Developers typically deploy AI models and inference services themselves using Kubernetes and Docker. Akash functions more as an open GPU marketplace than a turnkey AI platform.

While this model increases development flexibility, it also requires developers to have experience with containerization and cloud-native operations.

For Web3-native teams, open-source AI developers, and decentralized applications, Akash’s open deployment model is often more appealing.

How Does Openness Differ Between Decentralized Cloud and Traditional Cloud?

Traditional cloud platforms are centralized, giving the provider strong control over resources, account permissions, regional restrictions, and service review mechanisms.

This structure supports enterprise compliance and risk management, but also means developers are dependent on a single platform.

Akash prioritizes open markets and censorship resistance. With resources contributed by providers around the globe, developers have greater freedom to deploy AI models, Web3 nodes, and containerized applications.

This openness is a key reason Web3 and DePIN projects focus on decentralized cloud solutions.

However, for large enterprises, centralized platforms still offer clear advantages in security auditing, data compliance, and service reliability.

How Do Developer Experiences Differ Between Akash and AWS?

AWS boasts a mature developer ecosystem and tool suite. Developers can quickly launch GPU instances, configure networks, and access AI services via the console.

Extensive official documentation, SDKs, and enterprise support reduce the learning curve for traditional development teams.

Akash is geared toward Web3 and Kubernetes-native development.

Developers must understand concepts such as Deployment, Bid, Lease, and SDL configuration, and manage container deployments independently. Compared to AWS, Akash is better suited for developers familiar with cloud-native technology and decentralized infrastructure.
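How these concepts relate can be sketched as a small state model. This is a simplified illustration, not Akash's SDK or on-chain data model: the class names, fields, and state names below are assumptions chosen for readability, and the SDL is represented as an opaque string.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class DeploymentState(Enum):
    OPEN = "open"      # deployment posted, waiting for provider bids
    LEASED = "leased"  # a bid was accepted and a lease exists
    CLOSED = "closed"  # the developer shut the deployment down


@dataclass(frozen=True)
class Bid:
    provider: str
    price: float  # arbitrary units, hypothetical


@dataclass(frozen=True)
class Lease:
    provider: str
    price: float


@dataclass
class Deployment:
    sdl: str  # SDL text describing containers and resources (opaque here)
    state: DeploymentState = DeploymentState.OPEN
    bids: list[Bid] = field(default_factory=list)
    lease: Optional[Lease] = None

    def receive_bid(self, bid: Bid) -> None:
        """Record a provider's bid while the deployment is still open."""
        if self.state is not DeploymentState.OPEN:
            raise ValueError("bids are only accepted while the deployment is open")
        self.bids.append(bid)

    def accept(self, bid: Bid) -> Lease:
        """Accept one of the received bids, which creates the lease."""
        if self.state is not DeploymentState.OPEN:
            raise ValueError("deployment is not open")
        if bid not in self.bids:
            raise ValueError("bid was not submitted for this deployment")
        self.lease = Lease(bid.provider, bid.price)
        self.state = DeploymentState.LEASED
        return self.lease

    def close(self) -> None:
        """Shut the deployment down; no further bids are accepted."""
        self.state = DeploymentState.CLOSED


if __name__ == "__main__":
    deployment = Deployment(sdl="<SDL file contents>")
    offer = Bid("provider-a", 1.5)
    deployment.receive_bid(offer)
    print(deployment.accept(offer))
    deployment.close()
    print(deployment.state)
```

The point of the sketch is the ordering: the developer describes the workload in SDL and posts a deployment, providers respond with bids, and accepting a bid produces the lease under which the containers actually run.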

This model also offers greater freedom, letting developers customize AI workloads and GPU strategies with more flexibility.

Will Decentralized Cloud Replace Traditional Cloud?

At present, decentralized cloud is more likely to supplement the traditional cloud market than fully replace it.

AWS retains clear advantages in enterprise services, global reach, and stability. For large enterprises and financial institutions, robust data security and SLAs remain critical.

Decentralized GPU marketplaces like Akash are best suited for open AI infrastructure, Web3 node deployment, and GPU cost optimization.

Summary

Akash Network and AWS both deliver GPU and cloud computing resources, but each represents a distinct trajectory for cloud computing evolution.

AWS is a conventional centralized cloud platform, building global infrastructure through large data centers and enterprise-grade services. Akash uses an open GPU marketplace to aggregate idle computing power worldwide, offering more flexible resource access for AI and Web3 applications.

FAQs

Why Are GPUs on Akash Usually Cheaper?

Akash uses a provider bidding model, so GPU prices are determined dynamically by market supply and demand. As a result, when supply is ample, GPU resources on Akash are often less expensive than those on traditional cloud platforms.

Does AWS Support AI Model Deployment?

Yes. AWS offers SageMaker, EC2 GPU instances, and a range of AI services for training and deploying AI models.

What Use Cases Are Best for Akash?

Akash is ideal for AI inference, Web3 node operation, GPU cost optimization, and open AI infrastructure scenarios.

Is Decentralized Cloud More Secure Than Traditional Cloud?

The security models differ. Traditional cloud emphasizes enterprise-grade security and compliance, while decentralized cloud focuses on openness and censorship resistance.

Will Decentralized Cloud Replace AWS?

It’s more likely to be complementary. Traditional cloud continues to dominate the enterprise market, while decentralized GPU marketplaces are becoming an important supplement for AI and Web3 infrastructure.
